OpenAI becomes an Nvidia shop.

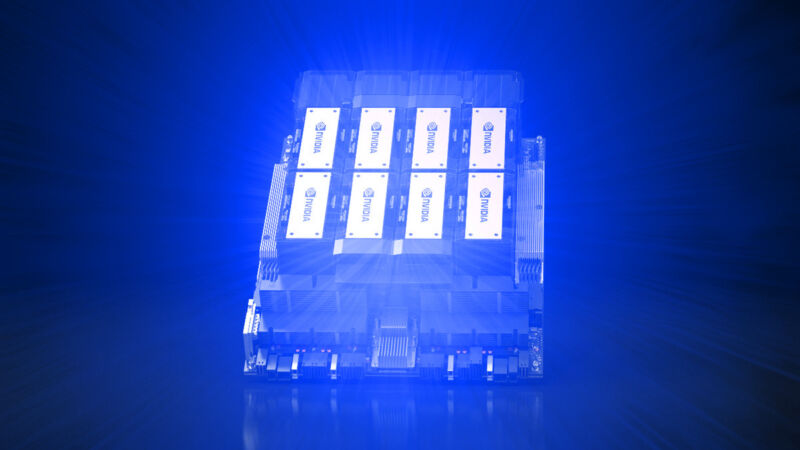

- Nvidia’s deal with OpenAI will provide OpenAI with $100bn of capital to spend on datacentres, almost all of which will come back to Nvidia in the form of chip sales, dealing a blow to the fledgling competition.

- This deal is really a purchase agreement where OpenAI pays in equity for Nvidia’s chips, but there is also a commitment to co-optimise technology and roadmaps.

- This means that OpenAI’s technology will run better on Nvidia than on anyone else’s hardware and that OpenAI will prefer to use Nvidia over anyone else for both training and, presumably, inference.

- This is a blow for Nvidia’s competitors, such as AMD, and I suspect that this also means the end of OpenAI’s in-house chip development for now.

- OpenAI was recently on stage at an AMD AI event, extolling the qualities of the MI400 series, but how much OpenAI will now spend with AMD is open to question.

- Given the optimisation for Nvidia, this may now be much less in the long run.

- When the deal is signed, Nvidia will put in $10bn at the prevailing valuation of OpenAI, and each time 1GW of compute comes online, Nvidia will invest another $10bn up until the point that $100bn is reached.

- The first GW of compute is expected to come online in H2 2026 and will be built on the forthcoming Vera Rubin system that I expect to be formally launched at GTC in 2026.

- The other question that is currently going unanswered is power, as all of this infrastructure will require roughly 10GW, or the capacity of 10 large nuclear power stations, to run.

- This equates to roughly 0.8% of the entire power generation capacity of the USA (1.3TW), and given how long it takes to permit and build electricity generation, I think that this is going to be a problem if the build-out continues at this pace.

- The only carbon-free option here is nuclear power, which is going through a renaissance and where I have a position in the fuel cycle.

- The language of the release is revealing in that it is clear that there is enough wiggle room for either Nvidia or OpenAI to back out of the deal if market conditions change.

- This makes this arrangement effectively a frame order which defines quantity and maybe price, but does not guarantee revenues for Nvidia until the specific orders are placed.

- It also means that if there is a correction or a downturn or OpenAI gets into some form of financial difficulty, then the conveyor belt of cash is likely to grind to a halt.

- I suspect that something like this is likely, as there is little doubt in my mind that datacentres are currently being overbuilt, meaning that a correction is inevitable.

- Crucially, there is already a lot of demand for datacentre capacity such that sales at some providers are being held up because they can’t build the capacity fast enough.

- This means that when the correction comes, it won’t be as deep or as long as the internet correction, but it will still be pretty painful, and I expect to see a number of players go out of business or be acquired.

- This means that it is in the picks and shovels of the AI boom that the biggest gains will be made.

- Here, I would highlight Nvidia, Samsung, Qualcomm, TSMC, MediaTek, SK Hynix, Micron and so on as the companies that are likely to benefit the most, and I hold positions in Qualcomm and Samsung.

- I would also highlight nuclear power as I have described above.

- It’s the high-flying LLM-creators that I would want to avoid.
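
The arithmetic behind the tranche structure and the power estimate above can be checked with a rough sketch. This is illustrative only: it takes the terms as described in this note ($10bn at signing, $10bn per GW, capped at $100bn) and assumes a total build of about 10GW against 1.3TW of US generation capacity. The function and parameter names are hypothetical, not anything from the release.

```python
def investment_schedule(gw_online, signing_bn=10, per_gw_bn=10, cap_bn=100):
    """Cumulative investment ($bn) at signing and after each GW comes online.

    Assumes the terms as described in this note, not the contract itself.
    """
    total = min(signing_bn, cap_bn)
    schedule = [total]  # index 0 is the position at signing
    for _ in range(gw_online):
        # Each GW triggers another tranche until the cap is reached.
        total = min(total + per_gw_bn, cap_bn)
        schedule.append(total)
    return schedule


def share_of_capacity(load_gw, national_capacity_gw=1300):
    """Fraction of national generation capacity taken by the build-out."""
    return load_gw / national_capacity_gw


schedule = investment_schedule(10)  # assumed 10GW build
print(schedule)
print(f"{share_of_capacity(10):.2%}")
```

On these assumptions, the $100bn cap is reached once the ninth GW comes online, since the signing tranche counts towards the total, and 10GW works out at a little under 0.8% of US capacity.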